Validating users' desire for detailed shipment tracking

Maersk Go (formerly Twill) is a dedicated branch of Maersk, the worldwide B2B shipping provider. Its goal is to simplify the complex online and offline processes of international cargo shipping for small businesses.

The goal was to discover which shipment tracking insights are valuable to the core user group, and how, with the aim of reducing support requests on this topic in the long term.

Maersk Go depends on Maersk's data pool for everything shipment-related. This often leads to unavailable data and slow connections. Neither Maersk nor Maersk Go currently provides any data on shipment status beyond a high-level overview through milestones and email updates.

I worked as a Product Designer in the Shipment Management team for five months. This team focuses on helping users complete required tasks, make amendments and gain insight into their shipment progress. The overall approach of this project was set in advance; everything else, from research to UI/UX design and stakeholder communication, was my responsibility.

- Analyse shipment tracking features of competitors, keeping in mind relevancy and feasibility.

- Validate the desire of Maersk's core user group for detailed tracking functionalities through painted door testing.

- Communicate research insights with stakeholders and provide next step recommendations.

- Design a scalable MVP to further validate and potentially build upon in the future.

- Hand over knowledge and designs for future elaboration.

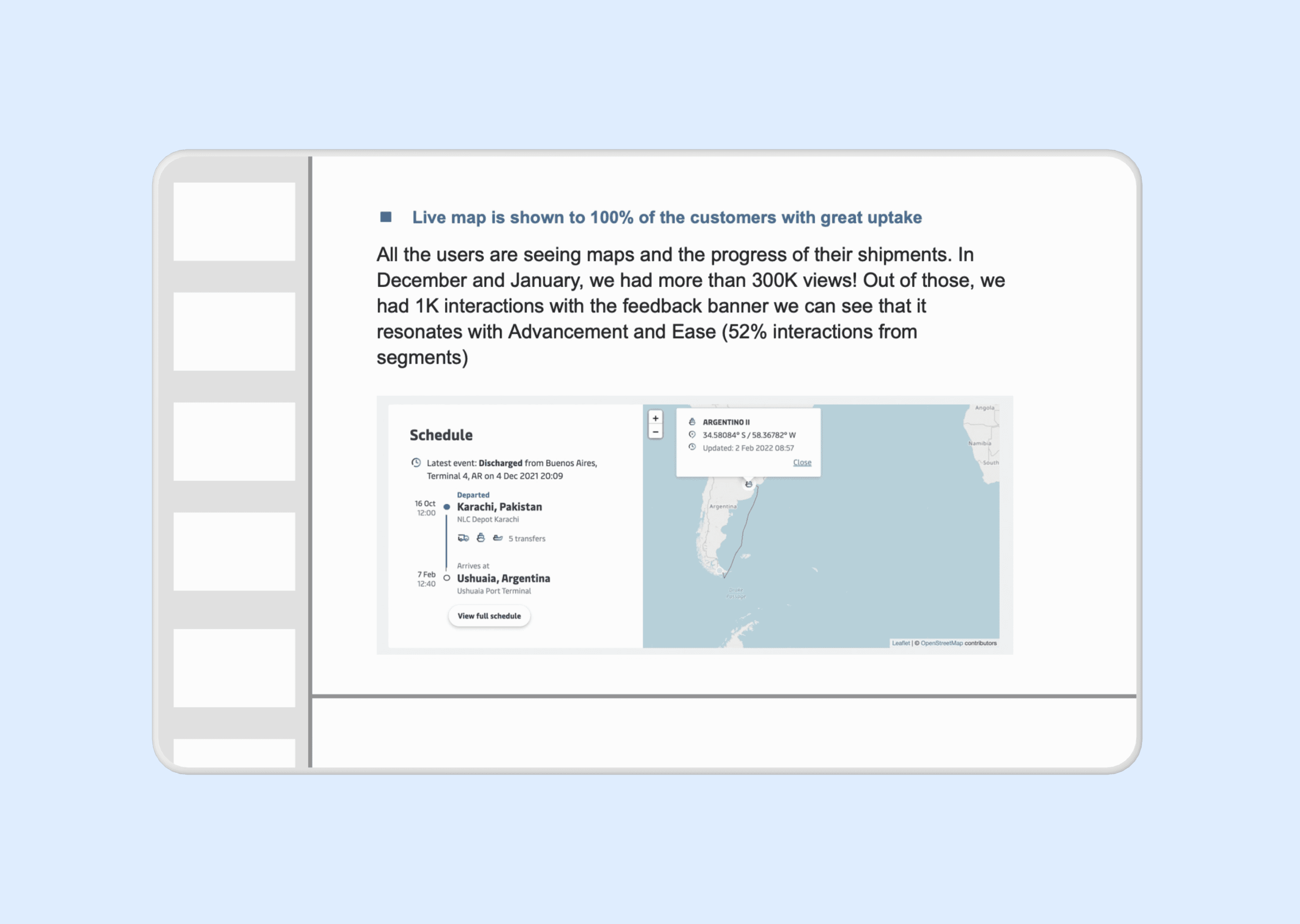

The painted door design resulted in a thousand interactions with the core user group, validating the desire for tracking features. This led to the release of a live map, which received over 300,000 views in its first two months.

Research

The hypothesis amongst stakeholders was that tracking insights would be irrelevant to their users. The design team believed the opposite, specifically because shipment journeys often take weeks or even months. Throughout this process, delays are common, and some of them are related to required user actions.

Competitor Analysis

The aim of the competitor analysis was to identify how similar organisations handle shipment tracking. I could use the findings as an indication of user need to persuade stakeholders, and as inspiration for an experiment design. The analysis addressed three questions:

- Which information do competitors communicate about tracking?

- How do competitors display tracking for multiple active shipments?

- Do competitors draw a relation between tracking and user tasks?

The main findings were:

- All competitors use milestones to communicate about the shipment process.

- Some competitors offer a live map that can display multiple shipments in a single overview.

- Competitors offer different levels of tracking information. Some display vessel focused tracking, others container focused.

- Depending on the competitor, the information includes delay times, schedule updates, both, or neither.

Painted door test

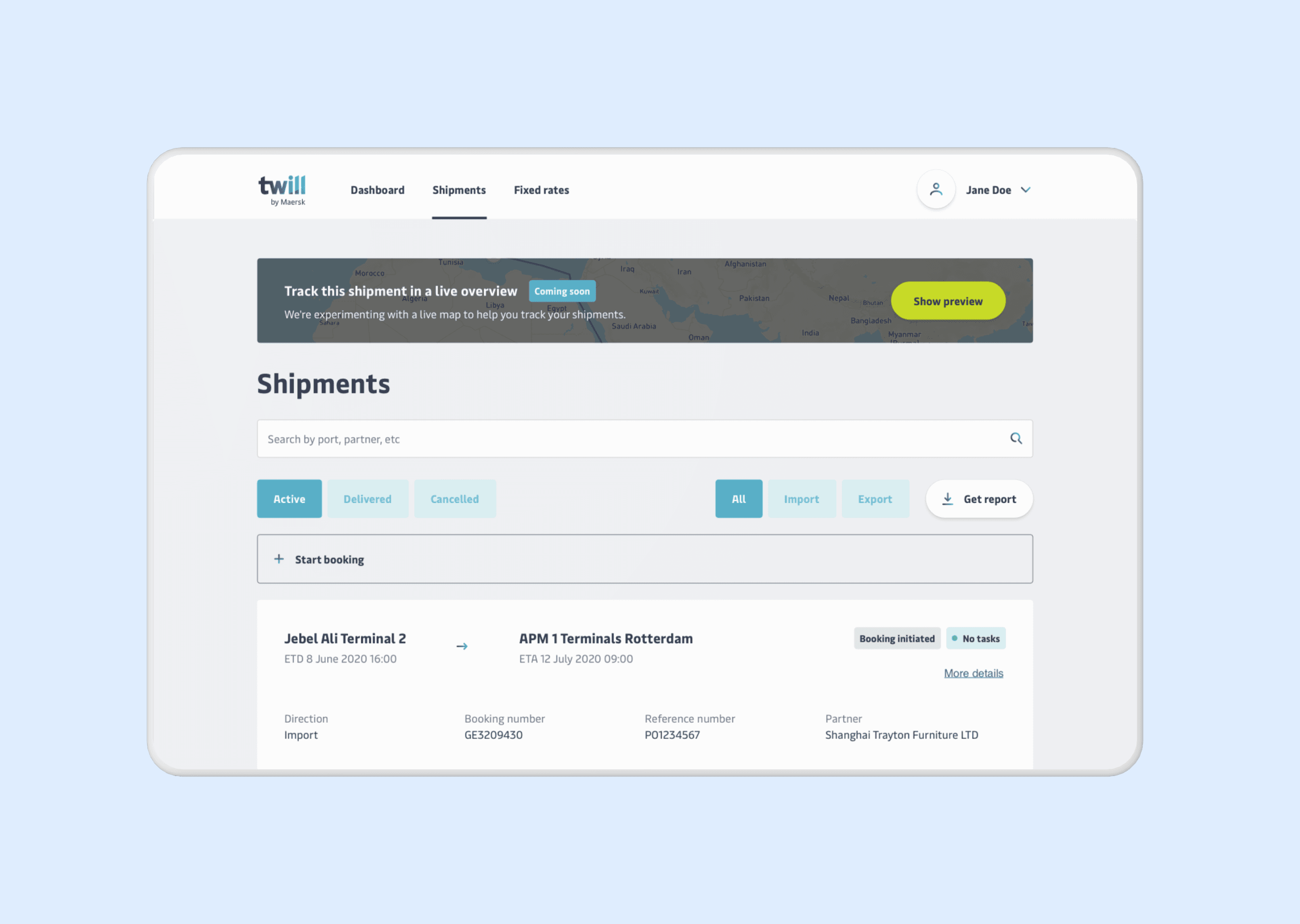

Based on feasibility, available data and stakeholder priorities, we determined our experiment approach together. The most important factor was that developers had already attempted to create a live map during a previous hackathon. This gave an indication of the effort required to build a working map experiment. To minimise development and design effort, we decided to run a painted door test: a banner that opens a survey on click.

The experiment ran for two weeks. Afterwards, I analysed the results and presented them to stakeholders.

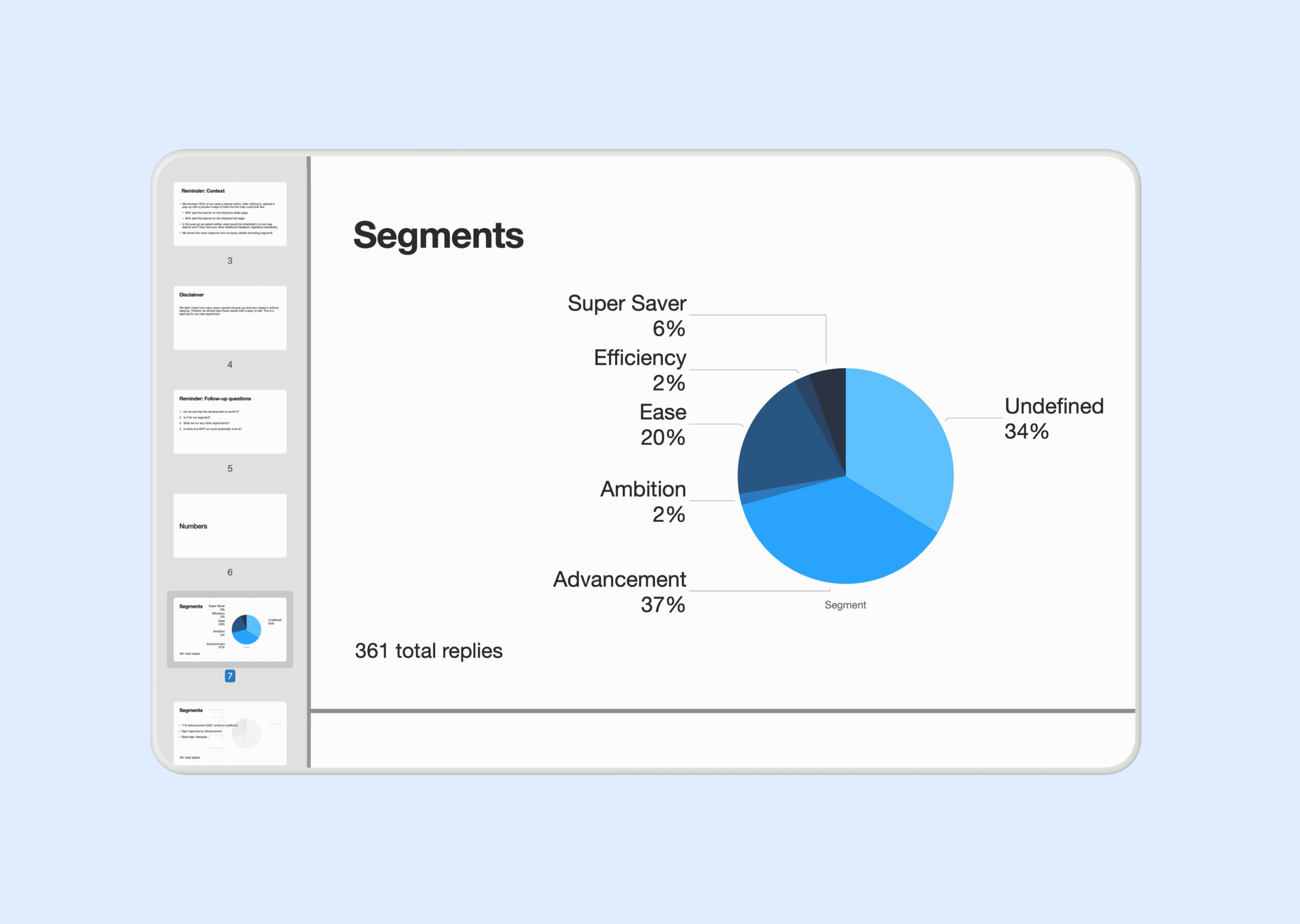

The main user segment had a 33% higher response rate than active users overall, which allowed us to conclude that within this group three times more users were triggered by the banner and responded to the survey. Across all segments, users who responded to the survey were positive.

These insights convinced stakeholders to let us design, build and launch a proof of concept for users.

Design

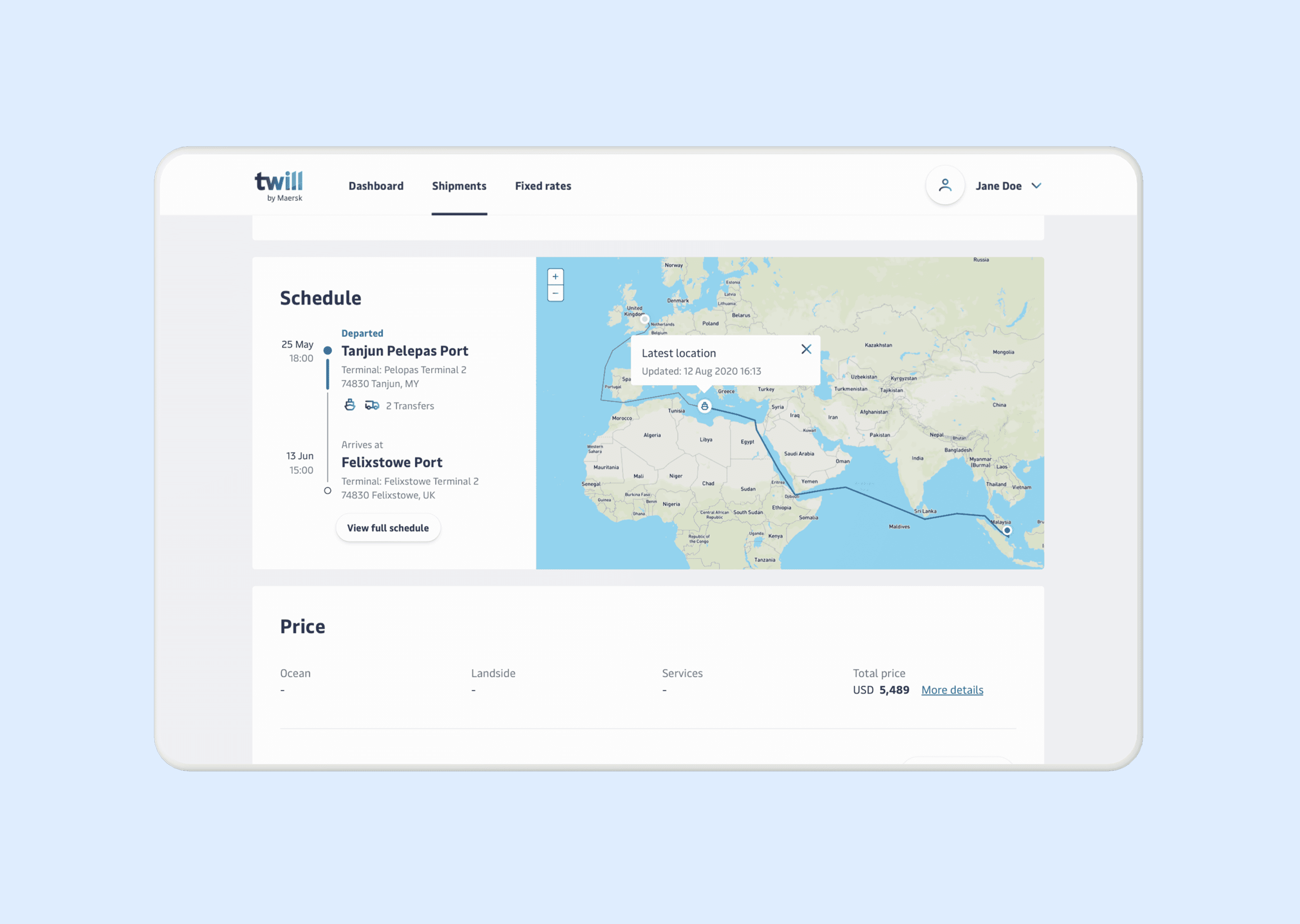

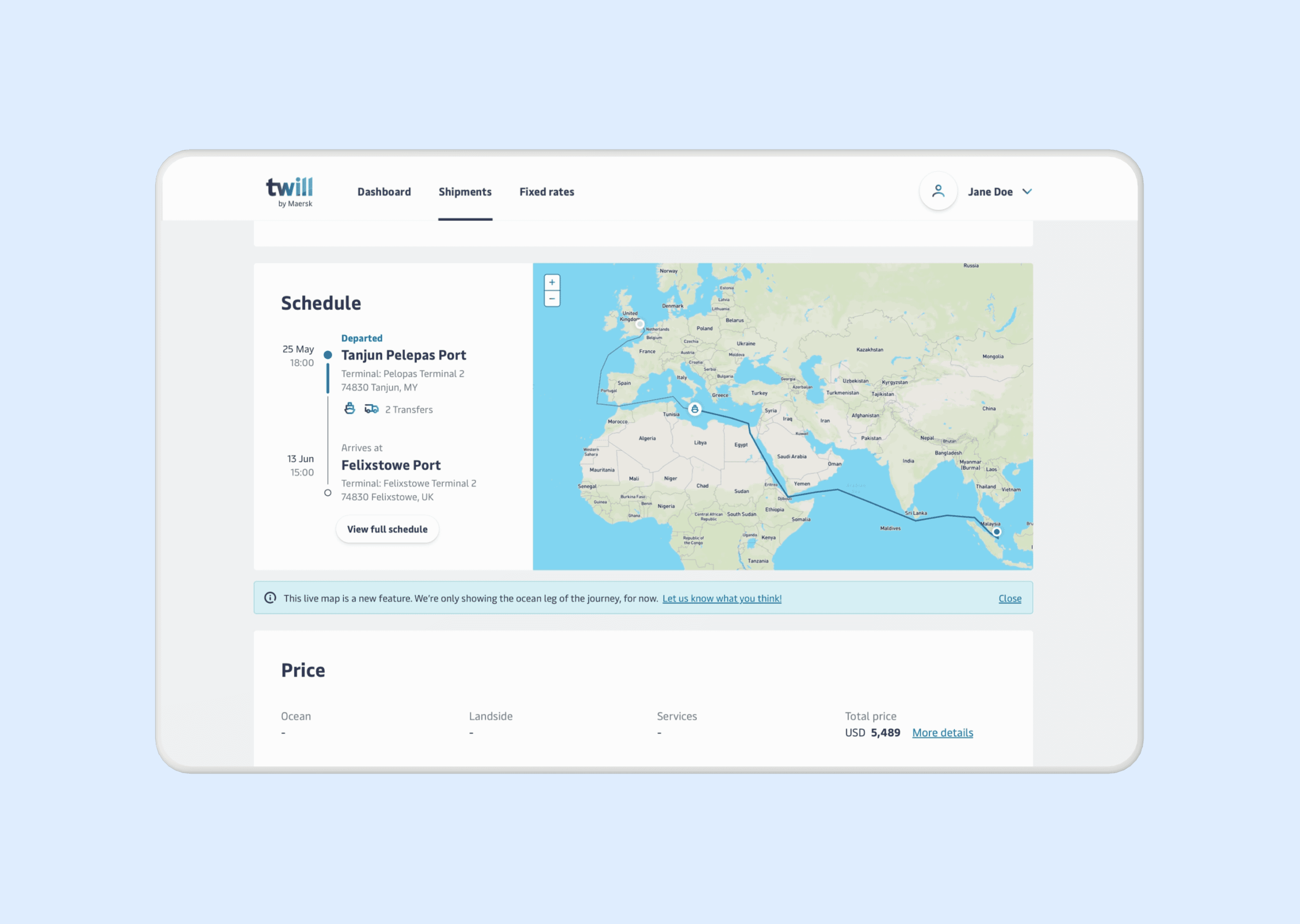

The experiment design focused on getting a first live version out, to further evaluate how users interacted with the map and learn how much depth of information they want. To stay within scope, tracking lived solely on the shipment detail page, which allows users to follow one shipment at a time.

Exploration

I explored two entry points for the map: at the top of the page alongside the milestones, and next to the schedule component. Since creating a relation between the map and tasks was too complex for this experiment, I moved forward with the placement next to the schedule component.

I chose to display the schedule on the left, drawing attention first to the overall journey, which can also include transport by truck. The map then helps users understand the deeper context by showing the vessel's position.

I wanted the map to highlight where to focus, so I decided to display it in color, allowing users to quickly distinguish water from land. I included country names to help users identify the approximate location. On click, users can see when the vessel location was last updated. In the future, this would make it possible to show updated arrival times and delays.

Validation

From the painted door test through to the release of the experiment design, the live map was received positively. Validation primarily consisted of quantitative analysis of user interactions, time spent viewing the schedule area, and tracking-related support tickets. Unfortunately, I left Maersk Go after the experiment was released.

However, I stayed in touch with the team and learned that the live map was released to 100% of users later that year. In December and January it received more than 300,000 views. This uptake reflects the strong response from the primary user group that the painted door test had indicated. The team also continued to build on the existing design by adding coordinates and detailed cargo statuses, which validated the scalability of my initial design.

Reflection

This project was incredibly valuable to me as a designer. I discovered what it's like to join a multidisciplinary team and carry responsibility for a specific area of a product. The product's complexity taught me a lot about covering edge cases and different states. My team had no visual designers or copywriters, so I had to learn about those disciplines as well. Looking back, this was my first step in broadening my expertise from UX to Product Design.

One thing I approach differently now: in this competitor analysis I only covered other shipping organisations. Nowadays, I also include one or two other types of organisations, specifically for inspiration.

During the painted door test I also learned about the complexity of metrics. When running the test, we forgot to track users who opened the survey module but did not submit it, so our data didn't show the full picture. We did inform stakeholders about this when presenting the insights, but I now know this was a very important metric for identifying who only opened the survey, or completed it but never pressed submit.